Introduction

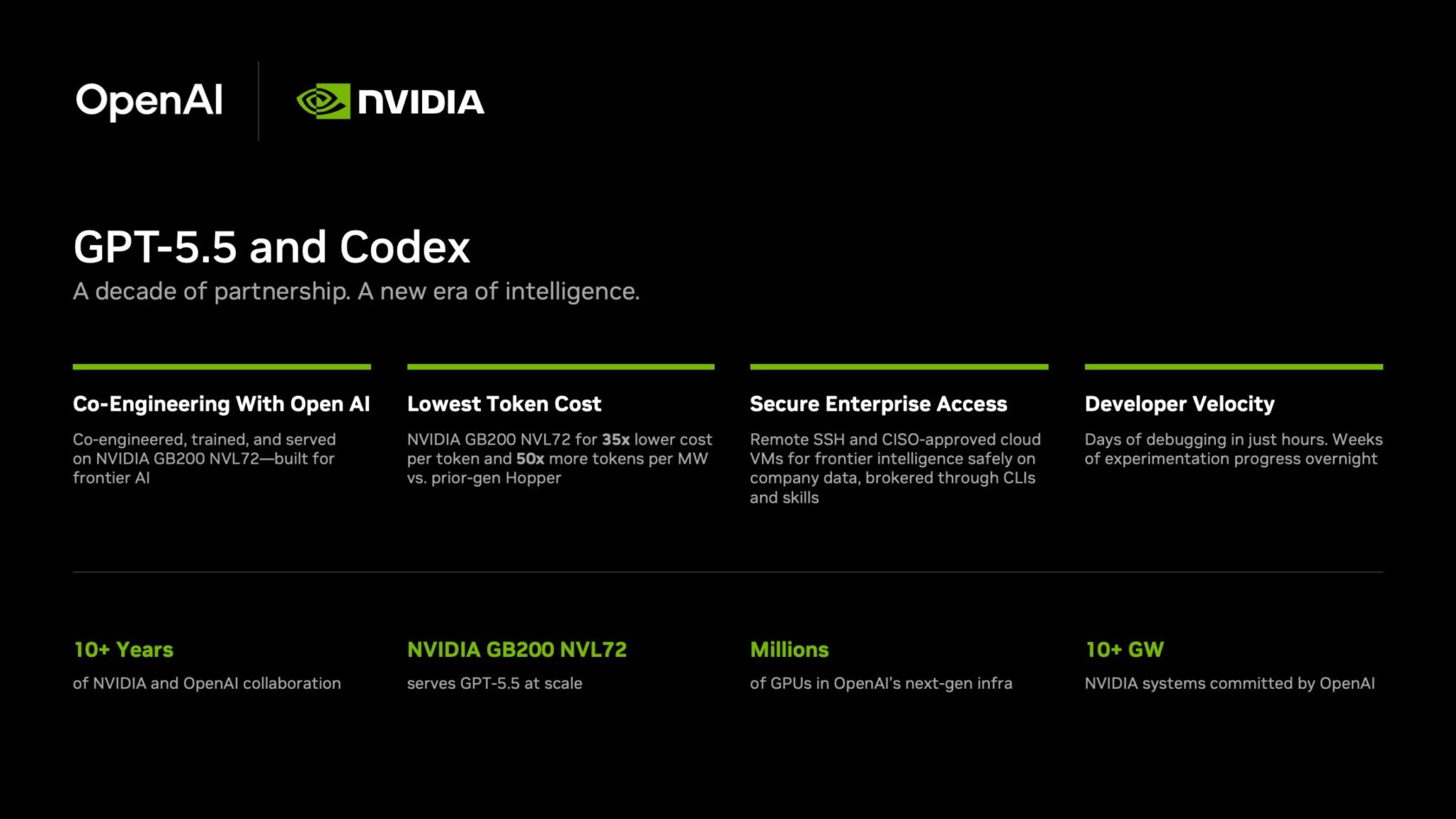

AI agents are changing how development teams work by taking on complex tasks such as debugging, experimentation, and shipping features. OpenAI's Codex, now powered by the latest GPT-5.5 model running on NVIDIA GB200 NVL72 rack-scale systems, extends this shift to enterprise knowledge work. At NVIDIA, over 10,000 employees across engineering, product, legal, marketing, and more have achieved what they describe as "mind-blowing" and "life-changing" results. Debugging cycles that once took days now close in hours; experiments that needed weeks turn into overnight progress. This guide walks you through deploying a similar setup for your organization, using GPT-5.5 and NVIDIA infrastructure while maintaining enterprise-grade security and auditability.

What You Need

- Access to the OpenAI GPT-5.5 model (via licensing or partnership)

- NVIDIA GB200 NVL72 rack-scale systems (for optimal inference performance and cost efficiency)

- Codex application (OpenAI's agentic coding tool)

- Secure Shell (SSH) client for remote connections

- Cloud virtual machines (VMs) configured for your enterprise network

- Enterprise security policies including zero-data retention and read-only permissions

- Team training plan to encourage adoption across departments

Step-by-Step Deployment Guide

Step 1: Set Up NVIDIA GB200 NVL72 Infrastructure

Begin by deploying NVIDIA GB200 NVL72 rack-scale systems in your data center or cloud environment. These systems are designed to deliver 35x lower cost per million tokens and 50x higher token output per second per megawatt compared to previous-generation hardware. This makes frontier-model inference economically viable at enterprise scale. Work with your infrastructure team to procure and install the racks, ensuring they meet power and cooling requirements. For cloud-based deployments, coordinate with your cloud provider for dedicated instances.
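To get a feel for what a 35x cost reduction could mean for a budget, the arithmetic can be sketched as below. The baseline price and monthly token volume are hypothetical placeholders chosen for illustration, not figures from NVIDIA or OpenAI; only the 35x factor comes from the claim above.

```python
def projected_monthly_cost(baseline_cost_per_million: float,
                           monthly_tokens: int,
                           cost_reduction_factor: float = 35.0) -> float:
    """Estimate monthly inference spend after applying a cost-reduction factor."""
    if cost_reduction_factor <= 0:
        raise ValueError("cost_reduction_factor must be positive")
    cost_per_million = baseline_cost_per_million / cost_reduction_factor
    return cost_per_million * (monthly_tokens / 1_000_000)


# Hypothetical inputs: $7.00 per million tokens on older hardware,
# 2 billion tokens per month.
before = projected_monthly_cost(7.00, 2_000_000_000, cost_reduction_factor=1.0)
after = projected_monthly_cost(7.00, 2_000_000_000)
print(f"before: ${before:,.2f}  after: ${after:,.2f}")
```

Substitute your own measured baseline and traffic estimates; the function only scales a per-million-token price and makes no claim about actual hardware pricing.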

Step 2: Deploy OpenAI GPT-5.5 Model

Once your NVIDIA hardware is ready, deploy the GPT-5.5 model on the GB200 NVL72 systems. Partner with OpenAI to obtain the model weights and appropriate licenses. Use NVIDIA's optimized inference stack (e.g., TensorRT-LLM) to maximize performance. Configure the model to serve requests with low latency and high throughput. Test the deployment with sample queries to verify that the model responds correctly and that token generation meets your performance benchmarks.
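The verification step might look something like the following minimal sketch. It assumes a `generate` callable that wraps whatever client your deployment exposes and returns the generated tokens as a list; that callable, and the `fake_generate` stand-in used here, are hypothetical and not part of any OpenAI or NVIDIA API.

```python
import time
from typing import Callable, List, Sequence


def benchmark(generate: Callable[[str], List[str]],
              prompts: Sequence[str],
              clock: Callable[[], float] = time.perf_counter) -> dict:
    """Time each prompt and report latency and aggregate token throughput.

    `generate` is a hypothetical stand-in for your client call; it is
    assumed to return the generated tokens as a list.
    """
    latencies = []
    total_tokens = 0
    for prompt in prompts:
        start = clock()
        tokens = generate(prompt)
        latencies.append(clock() - start)
        total_tokens += len(tokens)
    elapsed = sum(latencies)
    return {
        "requests": len(latencies),
        "total_tokens": total_tokens,
        "mean_latency_s": elapsed / len(latencies) if latencies else 0.0,
        "max_latency_s": max(latencies, default=0.0),
        "tokens_per_second": total_tokens / elapsed if elapsed > 0 else 0.0,
    }


def fake_generate(prompt: str) -> List[str]:
    # Placeholder model: echoes the prompt's words back as "tokens".
    return prompt.split()


report = benchmark(fake_generate, ["hello world", "ping"])
print(report)
```

This measures sequential requests only. A realistic benchmark would also issue concurrent requests, since throughput under load is what matters for a shared deployment.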

Step 3: Configure Codex Application with Enterprise Security

Now set up the Codex application to connect securely to your GPT-5.5 instance. Since Codex agents need dedicated environments, use Secure Shell (SSH) connections from the app to approved cloud virtual machines (VMs). Each VM acts as a sandbox where the agent can operate with full capabilities while maintaining auditability. Implement a zero-data retention policy – no data from prompts or outputs is stored by the platform. Agents should access production systems only with read-only permissions through command-line interfaces and the Skills agentic toolkit. This ensures that even if an agent makes an error, it cannot alter critical data.
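As one illustration of the read-only principle, a command filter could sit between the agent and the production shell. The sketch below is an assumption about how such a guard might be written, not how Codex or the Skills toolkit actually enforces permissions; the allowlist contents are hypothetical. Real enforcement belongs in OS-level permissions and in the credentials issued to each VM, with a filter like this serving only as a secondary check.

```python
import shlex

# Hypothetical allowlist of commands treated as read-only in this sketch.
READ_ONLY_COMMANDS = {"cat", "ls", "grep", "head", "tail", "stat"}
# Shell metacharacters that could chain commands or redirect into a write.
FORBIDDEN_CHARS = set(";|&><`$\n")


def is_read_only(command: str) -> bool:
    """Return True only if the command is a single allowlisted program call."""
    if any(ch in FORBIDDEN_CHARS for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # for example, unbalanced quotes
        return False
    return bool(argv) and argv[0] in READ_ONLY_COMMANDS


for candidate in ["ls -la /var/log", "rm -rf /tmp/x", "cat a.txt; rm a.txt"]:
    verdict = "allow" if is_read_only(candidate) else "deny"
    print(f"{verdict}: {candidate}")
```

The filter is deliberately conservative: it rejects any command containing shell metacharacters, including harmless uses such as a `grep` pattern with `|`, in exchange for being simple to audit.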

Step 4: Integrate with Existing Workflows

After the security layer is in place, integrate Codex into your teams' daily workflows. For engineering teams, connect Codex to your code repositories and bug-tracking systems so it can assist with debugging; cycles that previously stretched across days can then close in hours. For experimentation, allow Codex to run overnight analyses on complex, multi-file codebases, turning weeks of work into overnight progress. For feature shipping, let teams generate end-to-end features from natural-language prompts, with higher reliability and fewer wasted cycles. Ensure that the Skills toolkit is configured to automate common tasks in other departments such as legal, finance, and HR.

Step 5: Train Teams and Encourage Adoption

Adoption is key to realizing the full benefits. Follow NVIDIA's example – CEO Jensen Huang sent a company-wide email urging everyone to use Codex, saying, "Let's jump to lightspeed. Welcome to the age of AI." Provide training sessions for each department, highlighting use cases relevant to their work. Create internal champions who can share their "mind-blowing" results. Monitor usage metrics to identify teams that are lagging and offer additional support. Encourage a culture where trying out Codex for various knowledge tasks becomes a daily habit.
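Spotting lagging teams from usage metrics can be as simple as comparing weekly active users to headcount. The sketch below assumes you can export both counts per team; the team names, numbers, and the 50% threshold are hypothetical.

```python
from typing import Dict, List


def lagging_teams(weekly_active: Dict[str, int],
                  headcount: Dict[str, int],
                  min_adoption: float = 0.5) -> List[str]:
    """Return teams whose share of weekly active users is below min_adoption."""
    lagging = []
    for team, size in headcount.items():
        if size <= 0:
            continue  # skip empty teams to avoid dividing by zero
        rate = weekly_active.get(team, 0) / size
        if rate < min_adoption:
            lagging.append(team)
    return sorted(lagging)


# Hypothetical export from a usage dashboard.
headcount = {"engineering": 200, "legal": 40, "marketing": 60}
weekly_active = {"engineering": 170, "legal": 8, "marketing": 30}
print(lagging_teams(weekly_active, headcount))
```

Teams that appear in the result are candidates for extra training sessions or a visit from an internal champion.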

Tips for Success

- Put security first: Always enforce zero-data retention and read-only permissions. Regularly audit SSH connections and VM configurations.

- Leverage cost efficiency: The GB200 NVL72's economics make it feasible to run GPT-5.5 continuously. Monitor token usage and optimize prompts to stay within budget.

- Start small, scale fast: Begin with a pilot group of power users, then expand based on their feedback and success stories.

- Use internal champions: Identify early adopters who can demonstrate tangible productivity gains (e.g., debugging time reduced from days to hours).

- Integrate gradually: Don't overhaul your entire workflow at once. Introduce Codex for specific tasks like code review or data analysis before moving to more complex agentic workflows.

- Measure what matters: Track key performance indicators like time saved, features shipped, and employee satisfaction. Share these metrics company-wide to build momentum.
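For the token-budget tip above, a simple run-rate projection can flag overspending before the month ends. This is a minimal sketch: the price per million tokens, the budget, and the usage figure are hypothetical inputs you would replace with your own billing data.

```python
def budget_status(tokens_used: int,
                  cost_per_million: float,
                  monthly_budget: float,
                  day_of_month: int,
                  days_in_month: int = 30) -> dict:
    """Project month-end spend from usage so far, assuming a steady daily rate."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be between 1 and days_in_month")
    spend = tokens_used / 1_000_000 * cost_per_million
    projected = spend / day_of_month * days_in_month
    return {
        "spend_to_date": spend,
        "projected_spend": projected,
        "over_budget": projected > monthly_budget,
    }


# Hypothetical figures: 500M tokens used by day 10 at $0.50 per million,
# against a $600 monthly budget.
print(budget_status(500_000_000, 0.50, 600.0, day_of_month=10))
```

A linear projection is crude, since usage tends to grow as adoption spreads, so treat the result as an early warning rather than a forecast.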