Meta’s WebRTC Modernization: Breaking Free from the Forking Trap

At Meta, real-time communication underpins a wide range of experiences: Messenger and Instagram video chats, low-latency Cloud Gaming, and VR casting on Meta Quest. For years, the company relied on a customized, high-performance variant of the open-source WebRTC library. Maintaining a permanent fork carried a hidden cost, however: the further Meta diverged from upstream, the harder it became to integrate community improvements. This common industry pitfall is known as the “forking trap.” Meta recently completed a large-scale migration that broke this cycle, moving more than 50 use cases to a modular architecture built on the latest upstream WebRTC. In this Q&A, we explore how Meta accomplished this, the challenges it overcame, and the lasting benefits for performance and security.

What is the “forking trap” and why did Meta want to escape it?

The forking trap occurs when an organization forks a large open-source project—like WebRTC—to add custom features or quick fixes, but then fails to keep up with upstream updates. Over time, the internal fork drifts further from the community version, making it increasingly costly to merge new commits. At Meta, this meant missing out on critical performance improvements, security patches, and new capabilities from the WebRTC community. The divergence also created technical debt: the more features Meta built on its fork, the harder it became to align with upstream without breaking existing functionality. Escaping this trap required a systematic approach to re-introduce upstream WebRTC as a foundation while preserving Meta-specific optimizations. The goal was to benefit from community innovation while retaining control over proprietary modifications.

What was the core technical challenge in modernizing WebRTC at Meta?

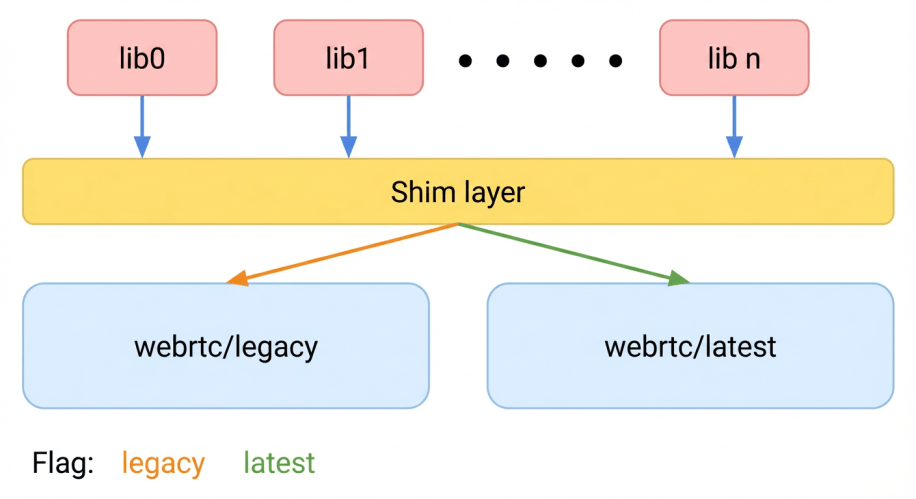

The primary challenge was upgrading WebRTC without disrupting services used by billions of people. A one-time switch to the latest upstream version risked introducing regressions across diverse devices and environments, so Meta needed a safe, incremental migration that allowed for rigorous A/B testing. However, the company’s monorepo and static linker constraints made it difficult to include two versions of the same library in one binary. Linking two copies of WebRTC together violates the C++ One Definition Rule (ODR): identically named symbols collide, causing duplicate-symbol link errors or, if the linker silently keeps only one definition, undefined behavior at run time. To solve this, Meta had to embed both the legacy fork and the new upstream version in the same address space and dynamically assign users to either version for testing, all while keeping binary-size growth in check and without breaking the build graph.
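To make the collision concrete: if both copies define `webrtc::PeerConnection`, the linker sees two definitions of the same mangled name. A standard C++ remedy, and the idea behind Meta’s approach of wrapping each version in its own namespace, is to place each copy under a distinct outer namespace so the fully qualified names differ. The following is a minimal, self-contained sketch under that assumption; the outer namespaces `webrtc_legacy` and `webrtc_latest` and the toy `PeerConnection` class are hypothetical and do not reflect WebRTC’s real API or Meta’s build setup.

```cpp
#include <string>

// Hypothetical stand-ins for two copies of the same library. In a real build,
// each copy would be compiled under its own outer namespace (for example via a
// build-time rename), so identically named C++ symbols mangle differently.
namespace webrtc_legacy {
namespace webrtc {
// Same class name and method signature as the copy below.
class PeerConnection {
 public:
  std::string Version() const { return "legacy-fork"; }
};
}  // namespace webrtc
}  // namespace webrtc_legacy

namespace webrtc_latest {
namespace webrtc {
class PeerConnection {
 public:
  std::string Version() const { return "upstream"; }
};
}  // namespace webrtc
}  // namespace webrtc_latest

// Both classes named webrtc::PeerConnection coexist in one translation unit
// (and one binary) without an ODR violation, because their fully qualified
// names, and therefore their mangled symbols, differ.
std::string DescribeBoth() {
  webrtc_legacy::webrtc::PeerConnection legacy;
  webrtc_latest::webrtc::PeerConnection latest;
  return legacy.Version() + "," + latest.Version();
}
```

Namespaces only rename C++ symbols. Functions declared `extern "C"` keep the same unmangled name whatever namespace they sit in, and preprocessor macros ignore namespaces entirely, so bundled C dependencies would still collide; presumably this is where careful management of symbol visibility comes in.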

How did Meta build a dual-stack architecture for A/B testing?

Meta’s solution was a dual-stack architecture that allowed two versions of WebRTC to coexist in the same application. They achieved this by wrapping each version in separate namespaces and carefully managing symbol visibility to avoid ODR violations. A dynamic routing layer, built into the real-time communication stack, could assign individual users or sessions to either the legacy fork or the latest upstream version. This enabled safe A/B testing across 50+ use cases—from Messenger calls to Cloud Gaming streams. The architecture also supported continuous upgrades: each new upstream release could be tested against a subset of users before a full rollout. If issues were detected, the system could quickly revert to the stable version without service interruption. This approach eliminated the need for a risky one-time migration and provided data-driven confidence for each upgrade.
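A routing layer of this kind can be sketched as deterministic bucketing: hash a stable session identifier into one of 100 buckets and send buckets below a rollout percentage to the upstream stack. The sketch below assumes that design; `StackRouter`, the choice of FNV-1a as the hash, and the global kill switch are illustrative assumptions, not Meta’s actual implementation.

```cpp
#include <atomic>
#include <cstdint>
#include <string>

// Which WebRTC stack a session should use.
enum class Stack { kLegacyFork, kUpstream };

// 64-bit FNV-1a: a small, stable hash, so the same session ID always maps to
// the same bucket across processes and restarts (std::hash makes no such promise).
uint64_t Fnv1a(const std::string& s) {
  uint64_t h = 14695981039346656037ULL;  // FNV offset basis
  for (unsigned char c : s) {
    h ^= c;
    h *= 1099511628211ULL;  // FNV prime
  }
  return h;
}

// Hypothetical router: assigns a configurable percentage of sessions to the
// upstream stack and can be flipped back to the legacy fork at runtime.
class StackRouter {
 public:
  explicit StackRouter(uint32_t upstream_percent)
      : upstream_percent_(upstream_percent > 100 ? 100 : upstream_percent) {}

  Stack Assign(const std::string& session_id) const {
    if (kill_switch_.load()) return Stack::kLegacyFork;  // instant revert
    uint32_t bucket = static_cast<uint32_t>(Fnv1a(session_id) % 100);  // 0..99
    return bucket < upstream_percent_.load() ? Stack::kUpstream
                                             : Stack::kLegacyFork;
  }

  // Widen or narrow the experiment without redeploying.
  void SetUpstreamPercent(uint32_t p) { upstream_percent_.store(p > 100 ? 100 : p); }

  // Send every subsequent assignment back to the stable stack.
  void Revert() { kill_switch_.store(true); }

 private:
  std::atomic<uint32_t> upstream_percent_;
  std::atomic<bool> kill_switch_{false};
};
```

Hashing rather than random assignment keeps a session on the same stack each time it is evaluated, which keeps A/B comparisons clean. Checking the kill switch first means one flag flip routes all new assignments to the legacy fork; sessions already in progress would need their own handling, which this sketch leaves out.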

What were the key workflows to stay continuously upgraded with upstream?

Meta established a continuous integration pipeline that automatically tracked the latest WebRTC upstream commits. When a new release was available, the team would build a version of the library with Meta’s proprietary components layered on top of the unmodified upstream skeleton. This “upstream core” was then tested in the dual-stack environment alongside the legacy fork. Performance, binary size, security, and latency metrics were compared across both versions. Only after passing rigorous A/B tests—on real user traffic—would the new version be promoted to a full rollout. The process also involved maintaining a clean patch set for Meta-specific features, ensuring they could be reapplied to each upstream update with minimal conflicts. This workflow turned upgrades from a painful manual effort into a routine, automated cycle, keeping Meta’s WebRTC continuously aligned with the community.
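The promotion step can be pictured as a gate over the metrics compared between the two stacks. The following is a minimal sketch assuming every metric is “lower is better” and carries a fixed relative tolerance; `MetricComparison`, `FailedGates`, `ShouldPromote`, and any metric names are hypothetical. A production gate would more likely rely on statistical tests over the A/B populations than on point comparisons like these.

```cpp
#include <string>
#include <vector>

// One metric compared between the legacy baseline and the upstream candidate.
// This sketch assumes lower is better for every metric (crash rate, latency, size).
struct MetricComparison {
  std::string name;
  double baseline;        // value observed on the legacy fork
  double candidate;       // value observed on the new upstream build
  double max_regression;  // allowed relative increase, e.g. 0.02 for 2%
};

// Returns the names of metrics that regressed beyond their tolerance.
std::vector<std::string> FailedGates(const std::vector<MetricComparison>& metrics) {
  std::vector<std::string> failed;
  for (const auto& m : metrics) {
    double allowed = m.baseline * (1.0 + m.max_regression);
    if (m.candidate > allowed) failed.push_back(m.name);
  }
  return failed;
}

// A candidate is promoted only if no metric failed its gate.
bool ShouldPromote(const std::vector<MetricComparison>& metrics) {
  return FailedGates(metrics).empty();
}
```

For example, a candidate whose crash rate rises from 0.010 to 0.013 against a 5% tolerance fails the gate, because the allowed ceiling is 0.010 × 1.05 = 0.0105.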

What performance and security benefits did Meta achieve?

By moving to the upstream-based modular architecture, Meta saw improvements in performance, binary size, and security. The upstream version incorporated optimizations from the broader WebRTC community, such as better codec handling, reduced latency, and more efficient memory usage. Binary size shrank because Meta could remove redundant code that had accumulated in the old fork. Security benefited from timely patches: critical vulnerabilities that were fixed upstream could be integrated quickly without waiting for manual backporting. Additionally, the dual-stack testing uncovered regressions early, preventing performance degradation from reaching end users. These gains were especially noticeable in resource-constrained environments like mobile devices and Meta Quest VR headsets. Overall, the modernization reduced maintenance overhead and allowed Meta to focus engineering effort on proprietary innovations rather than fighting with a diverging codebase.

How does this approach scale across 50+ use cases?

Meta’s solution was designed to be generic and reusable, not tied to any single product. The dual-stack architecture and continuous upgrade workflow were applied uniformly across 50+ use cases, covering video calls, cloud gaming, VR casting, and more. Each use case defined its own A/B testing parameters (e.g., session length, device type, region) but all shared the same underlying engine. The dynamic routing layer allowed per-session selection of WebRTC version, so different products could be migrated at their own pace. Metrics from all use cases fed into a central dashboard, giving engineers visibility into the impact of each upgrade across the entire portfolio. This scalability was possible because the architecture abstracted away the complexity of version coexistence, letting product teams focus on their specific real-time communication needs without worrying about the underlying library version conflicts.
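Per-use-case parameters on top of a shared engine suggest a data-driven design: each product registers a small configuration, and one function decides eligibility for every session. The sketch below assumes targeting by device type and region plus a rollout percentage; the field names, use-case keys, and the choice to keep unregistered use cases on the legacy fork are assumptions made for illustration.

```cpp
#include <cstdint>
#include <map>
#include <set>
#include <string>

// Hypothetical per-use-case rollout settings; every use case shares one
// engine and differs only in data like this.
struct UseCaseConfig {
  uint32_t upstream_percent;           // share of eligible sessions on upstream
  std::set<std::string> device_types;  // empty set = all device types
  std::set<std::string> regions;       // empty set = all regions
};

struct SessionInfo {
  std::string use_case;
  std::string device_type;
  std::string region;
  uint32_t bucket;  // stable 0-99 bucket, e.g. from hashing the session ID
};

// An empty allow-list matches everything.
bool Matches(const std::set<std::string>& allowed, const std::string& value) {
  return allowed.empty() || allowed.count(value) > 0;
}

// Returns true if this session should run on the upstream stack.
// Unregistered use cases stay on the legacy fork, so products opt in explicitly.
bool UseUpstream(const std::map<std::string, UseCaseConfig>& registry,
                 const SessionInfo& s) {
  auto it = registry.find(s.use_case);
  if (it == registry.end()) return false;
  const UseCaseConfig& cfg = it->second;
  if (!Matches(cfg.device_types, s.device_type)) return false;
  if (!Matches(cfg.regions, s.region)) return false;
  return s.bucket < cfg.upstream_percent;
}
```

Because each product edits only its own registry entry, one use case could sit at 50% while another is fully migrated, which is how products can move at their own pace on a single engine.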

What lessons can other organizations learn from Meta’s experience?

Meta’s journey offers several actionable lessons. First, avoid permanent forks of large open-source projects: they create hidden technical debt that compounds with every upstream release left unmerged. Instead, invest in a modular architecture that keeps a clean separation between upstream code and proprietary extensions. Second, prioritize safe rollout mechanisms such as dual-stack A/B testing to de-risk upgrades, especially when serving billions of users. Third, automate the upgrade pipeline so that merging upstream becomes a routine, low-effort task. Finally, measure everything: binary size, latency, crash rates, and user-experience metrics provide the data needed to validate each change. By following these principles, organizations can escape the forking trap and enjoy the best of both worlds, community innovation and custom optimization, without the maintenance nightmare.
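One way to keep that separation in code is to have proprietary features depend only on an interface the upstream library exposes, and to wrap the upstream implementation rather than patch it (the decorator pattern). The sketch below assumes that pattern; `FrameEncoder` and its subclasses are hypothetical stand-ins, not WebRTC’s actual encoder API.

```cpp
#include <memory>
#include <string>
#include <utility>

// Stand-in for an interface exposed by the upstream library. In this sketch
// it is the only thing proprietary code depends on.
class FrameEncoder {
 public:
  virtual ~FrameEncoder() = default;
  virtual std::string Encode(const std::string& frame) = 0;
};

// Stand-in for an unmodified upstream implementation.
class UpstreamFrameEncoder : public FrameEncoder {
 public:
  std::string Encode(const std::string& frame) override {
    return "enc(" + frame + ")";
  }
};

// Hypothetical proprietary extension: wraps the upstream encoder instead of
// editing it, so an upstream upgrade never has to be merged into this class.
class TaggingFrameEncoder : public FrameEncoder {
 public:
  explicit TaggingFrameEncoder(std::unique_ptr<FrameEncoder> inner)
      : inner_(std::move(inner)) {}

  std::string Encode(const std::string& frame) override {
    // Delegate to upstream, then add the proprietary behavior on top.
    return inner_->Encode(frame) + "+tag";
  }

 private:
  std::unique_ptr<FrameEncoder> inner_;
};
```

When upstream changes, the extension is recompiled against the interface rather than re-merged line by line. Features that need hooks upstream does not expose still require patches, which is why keeping the patch set small and clean matters.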
